This story is from July 17, 2025

ChatGPT is not your therapist — Here's what that means for you

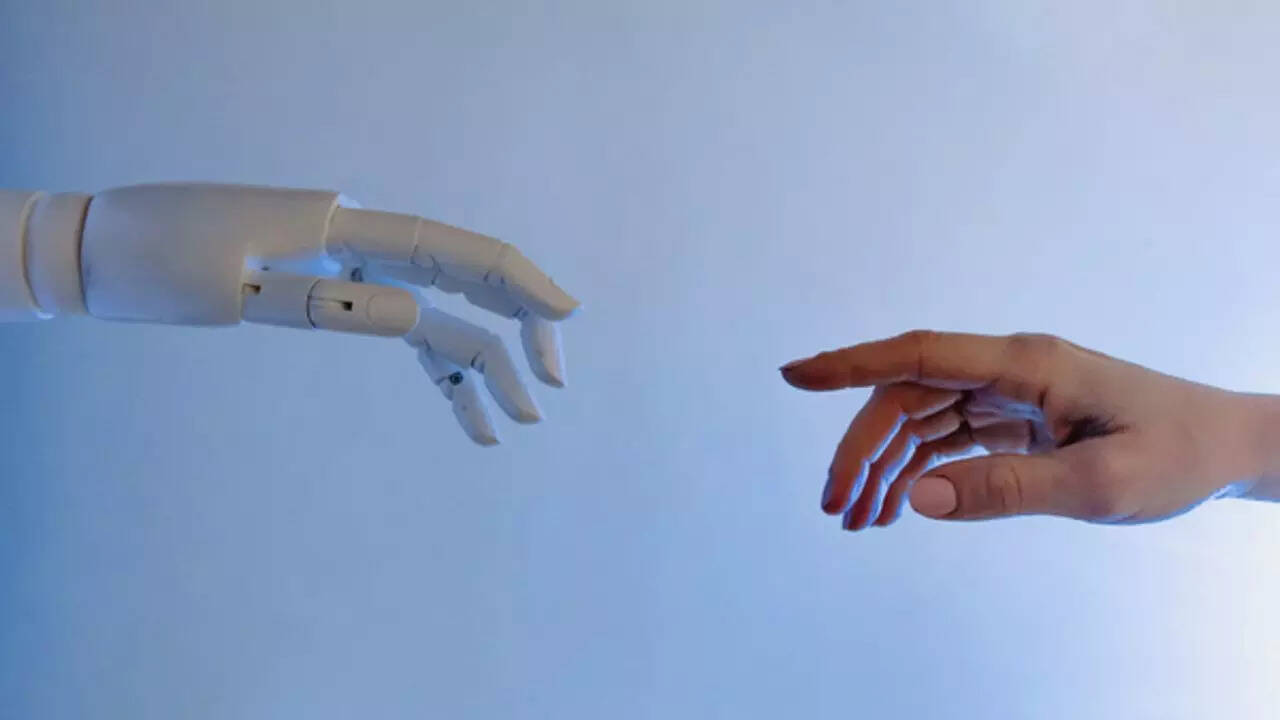

A recent study by researchers at Stanford University highlights serious concerns about using AI tools like ChatGPT for emotional support. The researchers found that ChatGPT often fails to meet basic standards of therapy: it can unintentionally reinforce harmful thoughts, provide overly agreeable answers to serious mental health issues, or respond with stigma to sensitive topics like addiction or psychosis. In some cases, it even offered misleading reassurance in situations involving suicidal ideation. The conclusion? These tools aren't built to handle the nuance, ethics, or responsibility required in mental health conversations. Using ChatGPT as a therapist might feel helpful in the moment, but it's no substitute for real, professional mental health support.

With the rise of AI tools offering instant answers and emotional validation, it's easy to fall into the trap of offloading your feelings onto a chatbot. After all, it's free, always available, and won't judge you. But here's the truth: ChatGPT isn't trained to handle mental health crises, trauma, or the deep complexities of human psychology. Relying on AI for emotional support can create a false sense of healing while leaving underlying issues untouched. Here's why it's risky, and what you should do instead.

Why you shouldn't treat ChatGPT as your therapist

ChatGPT isn’t a licensed professional

It may reinforce unhelpful thought patterns

No personalised treatment plans

It can miss signs of unwellness

You might delay getting real help

Privacy concerns still exist

Healing requires human connection

What you can use ChatGPT for instead

- Emotional vocabulary building

- Finding therapy resources near you

- Learning CBT techniques (with expert oversight)

- Setting goals or tracking habits
