- News

- Technology News

- Tech News

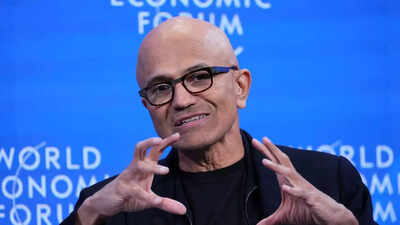

- How OpenAI is the reason why Microsoft CEO Satya Nadella is not ‘worried’ about Google’s AI capability

Trending

How OpenAI is the reason why Microsoft CEO Satya Nadella is not ‘worried’ about Google’s AI capability

Microsoft CEO Satya Nadella confirmed the company has access to all of OpenAI's intellectual property except consumer hardware, bolstering its AI capabilities against Google. While Microsoft's own Maia AI chip production faces delays until 2026, OpenAI is pursuing a multi-pronged chip strategy, including partnerships with Nvidia, AMD, and Google, alongside developing its own chips with Broadcom.

Microsoft CEO Satya Nadella said the company’s partnership with OpenAI gives it access to all of OpenAI's intellectual property except consumer hardware, providing Microsoft with AI capabilities that reduce concerns about Google’s competition in the field. OpenAI, for its part, has also struck deals with Nvidia, AMD and Google for AI chips, data centers and AI model training.

During a podcast, Nadella explained the scope of Microsoft's access to OpenAI's technology. When asked if Microsoft has access to OpenAI's IP, Nadella responded: “In our case, the good news here is OpenAI has a program in which we have access to.” When pressed about whether Microsoft has access to all of it, Nadella confirmed: “All of it.”

“So the only IP you don't have is consumer hardware?” the interviewer asked, to which Nadella responded, “That's it.”

“By the way, we gave them a bunch of IPs as well to bootstrap them, right? So, this is one of the reasons why they had a mass because we built all these supercomputers together, and they benefited from it. Rightfully so,” Nadella added.

Microsoft’s Maia chip delay

OpenAI's chip strategy
